I haven't written code in about a year.

Not in the traditional sense. I haven't opened a file, stared at a blank function, and typed out logic line by line. That workflow is over for me. And I don't think it's coming back.

What I do now is closer to directing. I describe what I want. I set constraints. I review what comes back. I course-correct. I ship. The models do the writing. I do the thinking.

This isn't a flex. It's just where we are.

The shift nobody's talking about honestly

Everyone's talking about AI in engineering. Most of the conversation is surface-level. "We're exploring AI tools." "We have a pilot program." That's not what's happening on the ground.

What's actually happening is a fundamental change in what it means to be an engineer.

I lead a product engineering team. A year ago we wrote code. Now we orchestrate agents. We define outcomes, feed context, review outputs, and iterate. The feedback loop went from days to minutes. We're shipping things in hours that used to take sprints.

And the people who are thriving aren't the ones who write the best TypeScript. They're the ones who can think clearly, describe problems precisely, and evaluate outputs fast.

The tooling is ready. Like, actually ready.

Let's be real about where we are.

Cursor changed the game. It went from "nice autocomplete" to a full agentic IDE. Plan mode lets you describe what you want at a high level and watch it reason through the implementation, plan the steps, and execute across your entire codebase. It's not autocomplete. It's a collaborator that understands your project.

And then there's Cursor Glass. Ambient context, always watching, always aware of what you're doing and what you need next. This is where the IDE is heading: not a text editor with AI bolted on, but an AI-native environment where the editor is secondary to the agent.

Claude Code is writing production-quality software. Not snippets. Full features. Multi-file refactors. Complex debugging sessions where it reasons through the problem better than most engineers would. Anthropic understood something others didn't: the model needs to live inside your workflow, not sit behind a chat window.

The infrastructure for agentic engineering is here. The question is whether you're using it or watching from the sidelines.

Early adopter vs laggard: the gap is compounding

Here's what I've noticed leading a team through this transition: the benefits of adopting AI-native workflows compound. And so does the cost of waiting.

Teams that started early have built intuition around prompting, agent orchestration, and AI-assisted review. They've developed workflows that multiply their output. They've learned where the models fail and how to compensate. That knowledge compounds every single week.

Teams that are "waiting to see" are falling behind in ways they can't easily measure. It's not just about speed. It's about the muscle memory of working with AI. You can't download that overnight.

This is a classic early-adopter dynamic, except the stakes are higher. In previous tech shifts, you could catch up. You could migrate to the cloud a few years late and be fine. This one moves faster. The 10-person team using agents properly is outshipping the 100-person team that's still debating whether to allow AI tools.

Slow orgs are going to get eaten

I say this with zero dramatic intent: large organizations that are slow to adopt AI-native engineering are going to get outcompeted by small teams that move fast.

We're already seeing it. Startups with 3-5 engineers are shipping products that would have required 20+ a year ago. Solo founders are building and launching SaaS products over a weekend. The leverage AI gives to small, fast-moving teams is unprecedented.

Meanwhile, enterprise orgs are forming AI committees, writing policy documents, running pilot programs, and scheduling review meetings about whether engineers should be "allowed" to use AI tools.

By the time they decide, the market will have moved.

What I've seen work

At Native Teams I've been pushing the team toward this for over a year. Here's what actually worked:

Trust the models more than feels comfortable. The instinct is to double-check everything, to not rely on AI output. That instinct is expensive. The models are good enough now that the default should be trust, with spot-checks. Not suspicion with occasional acceptance.

Make AI the default, not the exception. Every new feature starts with an agent. Every bug investigation starts with context fed to a model. Every code review is AI-assisted. It's not a tool you reach for. It's the environment you work in.

Hire for judgment, not just skill. The best engineers on my team aren't the fastest coders. They're the ones who can evaluate AI output critically, catch subtle issues, and redirect agents effectively. That's a different skill set than traditional engineering.

Ship vibe-coded solutions when appropriate. Not everything needs to be artisanal hand-crafted code. Some things just need to work. AI lets you prototype and validate at a speed that changes the economics of building software. We've shipped mobile apps, internal tools, and customer-facing features this way.

Where this is going

I think we're about 12-18 months away from a point where not using AI-native engineering is as conspicuous as not using version control. It'll just be the way software is built.

The engineers who will thrive are the ones who are building that muscle now. Who understand agentic patterns. Who can orchestrate multi-step AI workflows. Who know how to set up guardrails and evaluation loops.

The ones who are waiting for it to "mature" or "prove itself" are going to find themselves playing catch-up in a game that rewards compound experience.
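
To make "guardrails and evaluation loops" a bit more concrete, here's a minimal sketch of the pattern in Python. It's an illustration under assumptions, not how any particular tool works: `run_with_guardrails`, `fake_agent`, and the two checks are hypothetical names I made up, and `fake_agent` is a stub standing in for a real model call. The idea is simply to generate, run automated checks, feed the failures back, and stop after a fixed number of attempts.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

# A check inspects a candidate and returns an error message, or None if it passes.
Check = Callable[[str], Optional[str]]
# A generator takes the task plus feedback from the last attempt and returns a candidate.
Generator = Callable[[str, List[str]], str]


@dataclass
class LoopResult:
    output: Optional[str]          # accepted candidate, or None if every attempt failed
    attempts: int                  # how many candidates were generated
    failures: List[str] = field(default_factory=list)  # every check failure seen


def run_with_guardrails(generate: Generator, checks: List[Check],
                        task: str, max_attempts: int = 3) -> LoopResult:
    feedback: List[str] = []
    failures: List[str] = []
    for attempt in range(1, max_attempts + 1):
        candidate = generate(task, feedback)
        errors = []
        for check in checks:
            message = check(candidate)
            if message is not None:
                errors.append(message)
        if not errors:
            return LoopResult(candidate, attempt, failures)
        failures.extend(errors)
        feedback = errors  # the next attempt sees only the latest failures
    return LoopResult(None, max_attempts, failures)


def fake_agent(task: str, feedback: List[str]) -> str:
    # Hypothetical stand-in for a model: it only "finishes" the job after feedback.
    if feedback:
        return "def add(a, b):\n    return a + b\n"
    return "def add(a, b):\n    pass  # TODO\n"


def no_todo(candidate: str) -> Optional[str]:
    # Guardrail: reject output that still contains placeholder markers.
    return "contains TODO" if "TODO" in candidate else None


def must_compile(candidate: str) -> Optional[str]:
    # Guardrail: reject output that is not syntactically valid Python.
    try:
        compile(candidate, "<candidate>", "exec")
    except SyntaxError as exc:
        return f"syntax error: {exc.msg}"
    return None


result = run_with_guardrails(fake_agent, [no_todo, must_compile], "write add()")
print(result.attempts, result.failures)
```

In a real setup the checks would more likely be your test suite, a linter, and a type checker, and the attempt cap is what keeps an agent from looping forever on a problem it can't solve. Anything that exhausts the cap goes to a human.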

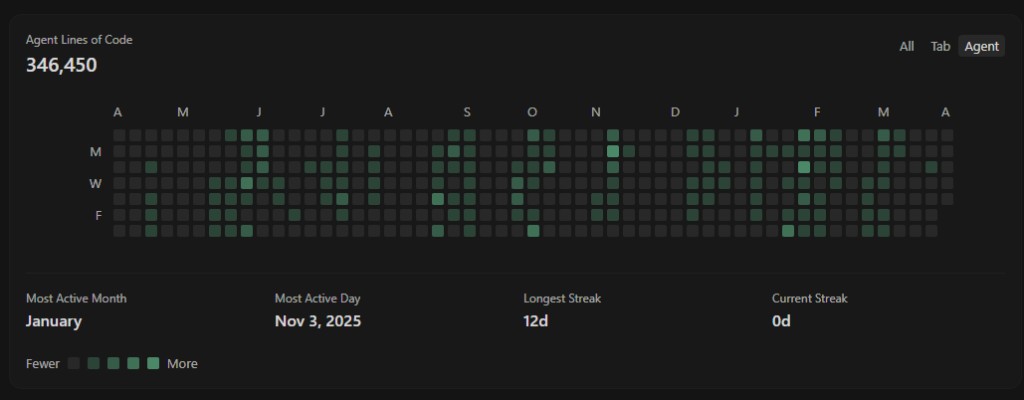

I'm not saying this to sound smart. I'm saying it because I've lived it for a year and the results speak for themselves. My team ships faster than ever. I build side projects in days that would have taken months. The quality hasn't dropped. If anything, it's gone up because I spend my time on architecture and product thinking instead of syntax.

The shift is here. The only question is whether you're leading it or getting dragged into it later.

- D